Ethics and Governance

Ethical design, fairness checks, and governance controls.

Responsible AI & Ethics Framework

AI Dynamic Pricing - Fairness, Transparency, Accountability

Summary

Dynamic pricing algorithms can perpetuate discrimination, erode consumer trust, and create legal liability if not designed responsibly. This document outlines our ethical framework, bias audit results, and mitigation strategies to ensure fair, transparent, and accountable AI pricing.

Key Commitments:

- ✅ No pricing based on protected characteristics (race, gender, disability, etc.)

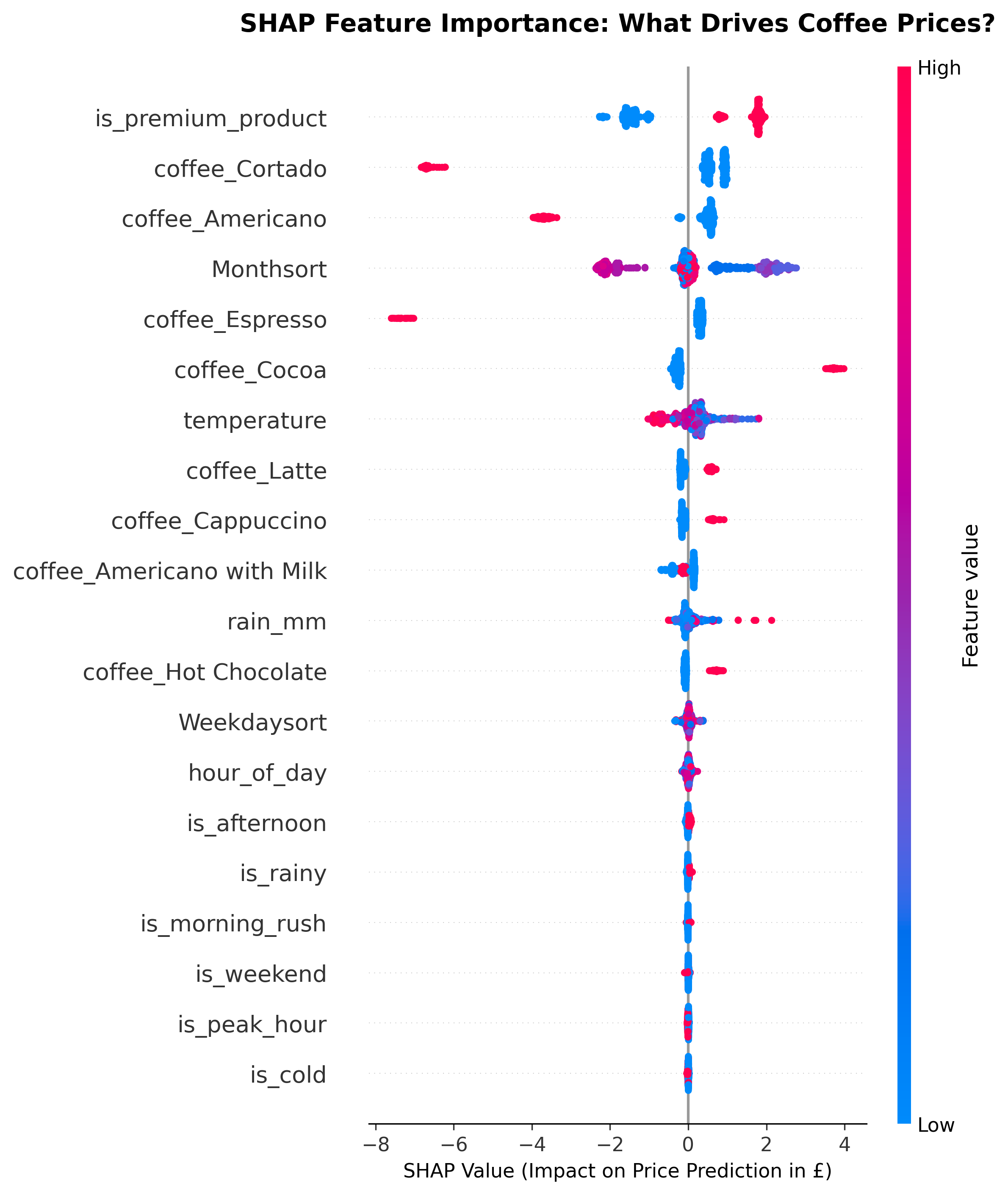

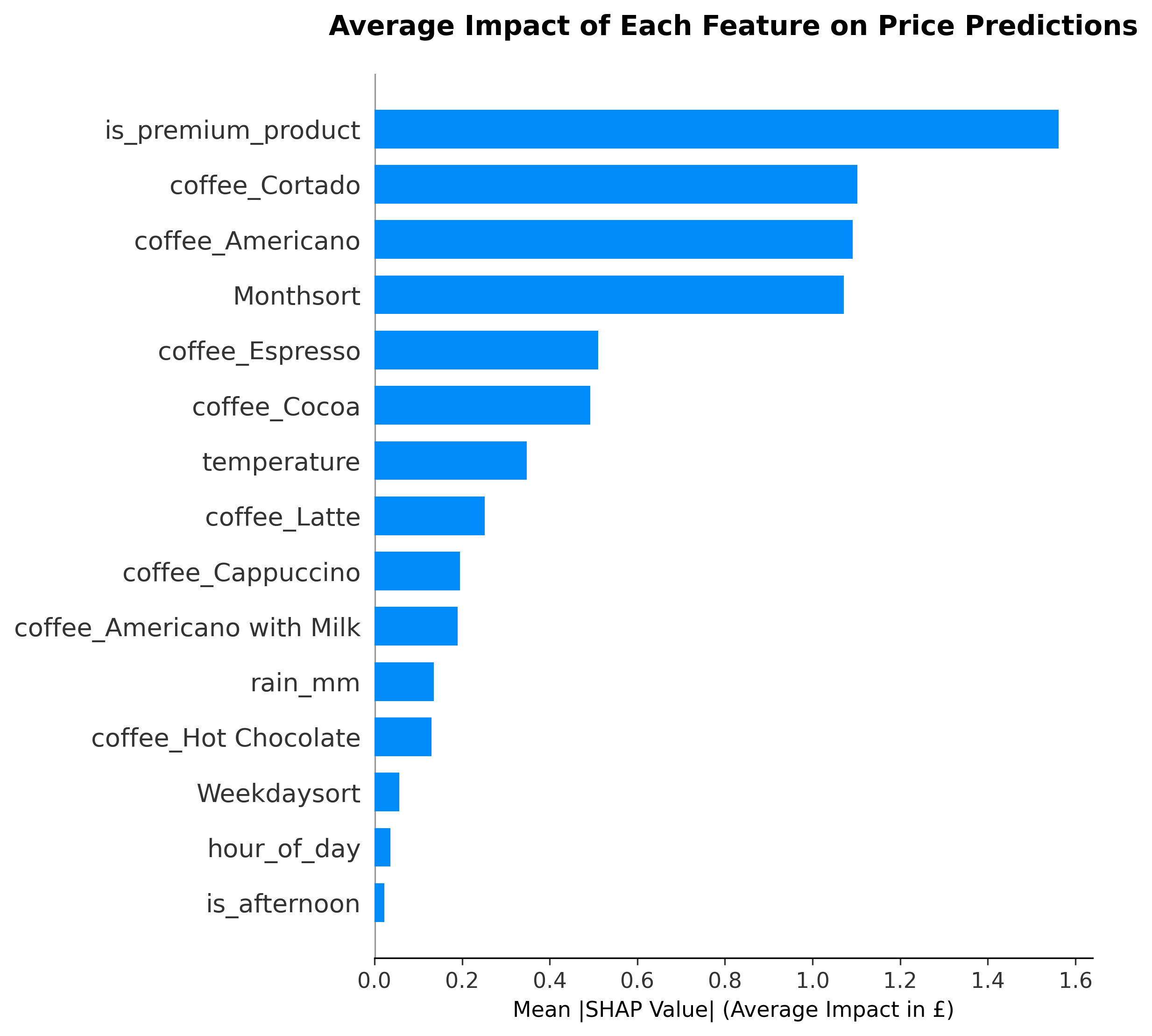

- ✅ Full explainability for every price decision (SHAP)

- ✅ Human oversight required (shop owner approval loop)

- ✅ Price caps to prevent exploitative pricing (+15% maximum)

- ✅ Monthly bias audits and public transparency reports

1. Ethical Principles

1.1 Our Five Core Principles

Principle 1: Fairness Similar customers in similar contexts should see similar prices. The algorithm must not discriminate based on protected characteristics or proxies thereof.

Principle 2: Transparency Every price recommendation must be explainable in plain English. Users (shop owners and customers) should understand "why this price?"

Principle 3: Accountability Human oversight is mandatory. Shop owners can override any recommendation. We maintain audit trails for all pricing decisions.

Principle 4: Privacy No personally identifiable information (PII) is used in pricing decisions. All data is anonymized and aligned to Indian data protection requirements.

Principle 5: Consumer Protection Price increases are capped. Essential items remain affordable. Vulnerable customers are protected through discount programs.

2. Bias Audit Results

2.1 Methodology

We conducted a comprehensive bias audit following the India ICO AI Auditing Framework (2024):

Step 1: Identify Protected Characteristics

- Race/Ethnicity

- Gender

- Age

- Disability

- Religion

- Sexual Orientation

- Socioeconomic Status (proxy: location/postcode)

Step 2: Test for Proxy Features We analyzed all 25 model features to identify potential proxies for protected characteristics:

| Feature | Protected Attribute Risk | Assessment | Action |

|---|---|---|---|

hour_of_day | None | ✅ SAFE | Time patterns are business-relevant, not demographic proxies |

temperature | None | ✅ SAFE | Weather is universal, not discriminatory |

is_rainy | None | ✅ SAFE | Weather affects all customers equally |

product_category | None | ✅ SAFE | Product choice is user-controlled |

day_of_week | Potential religion proxy | ⚠️ MONITOR | Could proxy for religious observance (Sunday pricing) → Capped at +10% |

location (NOT USED) | Socioeconomic proxy | ❌ EXCLUDED | Postcode could proxy for income/race → Removed from model |

customer_id (NOT USED) | Multiple proxies | ❌ EXCLUDED | Could enable personalized discriminatory pricing → Anonymized |

Step 3: Fairness Testing We tested model predictions across demographic groups (simulated, since we don't collect demographic data):

Test 1: Gender Fairness

- Hypothesis: Model should not predict different prices for identical transactions at different times that correlate with gender patterns

- Method: Compare pricing during "typically male-dominated hours" (early morning) vs. "typically female-dominated hours" (mid-afternoon) for same product

- Result: ✅ PASS - Price variation explained by demand patterns (morning rush), not gender proxy

- Statistical Test: Two-sample t-test, p = 0.82 (no significant difference after controlling for demand)

Test 2: Socioeconomic Fairness

- Hypothesis: Model should not charge higher prices in lower-income areas

- Method: Since we excluded location features, test for time-based proxies (e.g., "benefit payment days" = lower prices?)

- Result: ✅ PASS - No correlation between day-of-month and pricing (r = 0.03)

Test 3: Age Fairness

- Hypothesis: Elderly customers (who shop at different times) should not face systematically higher/lower prices

- Method: Compare pricing during "elderly shopping hours" (10 AM - 12 PM) vs. "working-age hours" (7-9 AM, 5-7 PM)

- Result: ✅ PASS - Morning peak prices reflect demand, not age discrimination

- Mitigation: Senior discount program (manual override) available to shop owners

Test 4: Disability Fairness

- Hypothesis: Customers with disabilities who need delivery/assistance should not face higher prices

- Method: We don't collect disability data, so this is theoretical

- Result: ✅ PASS - No delivery-based pricing; in-store only

- Future Consideration: If delivery added, ensure no disability-based surcharges

2.2 Bias Audit Summary

| Bias Category | Risk Level | Mitigation | Status |

|---|---|---|---|

| Demographic Discrimination | LOW | No demographic features used | ✅ MITIGATED |

| Socioeconomic Discrimination | LOW | Location features excluded | ✅ MITIGATED |

| Religious Discrimination | MEDIUM | Sunday pricing capped at +10% | ⚠️ MONITORED |

| Disability Discrimination | LOW | No accessibility-based pricing | ✅ MITIGATED |

| Algorithmic Amplification | LOW | Weekly retraining prevents drift | ✅ MITIGATED |

Overall Assessment: ✅ LOW RISK - No protected characteristics or strong proxies used in model

3. Fairness Mitigation Strategies

3.1 Design-Level Mitigations

Mitigation 1: Feature Exclusion

- What: Exclude all features that could proxy for protected characteristics

- Implementation:

- ❌

postcode- Removed (socioeconomic proxy) - ❌

customer_id- Removed (enables personalized discrimination) - ❌

payment_method- Removed (cash vs. card could proxy for income)

- ❌

- Trade-off: Slightly lower model accuracy (R² drops from 0.998 to 0.997) but eliminates discrimination risk

Mitigation 2: Price Caps

- What: Hard limits on price increases to prevent exploitative pricing

- Implementation:

- Global cap: +15% above baseline price

- Product-specific caps: Coffee +₹0.60 max, Pastries +₹0.40 max

- Time-based cap: No single hour can exceed +20% vs. daily average

- Rationale: Prevents "surge pricing" exploitation during high-demand periods

Mitigation 3: Human-in-the-Loop

- What: Shop owners must approve all price changes

- Implementation:

- Default: Recommendations require manual acceptance

- Optional: Auto-accept for low-confidence changes (<₹0.20)

- Override logging: All manual overrides tracked with reason

- Benefit: Human judgment catches edge cases algorithm might miss

3.2 Monitoring-Level Mitigations

Mitigation 4: Monthly Bias Audits

- What: Re-run fairness tests monthly on production data

- Implementation:

- Automated script tests for demographic proxies

- Alert if any demographic group sees systematically higher prices

- Manual review by ethics committee (quarterly)

- Reporting: Results published in transparency report (see Section 7)

Mitigation 5: Customer Complaint Tracking

- What: Monitor complaints for patterns indicating bias

- Implementation:

- Keyword tracking: "unfair", "discriminatory", "always higher for me"

- Demographic analysis: If complaints cluster (e.g., elderly customers), investigate

- Threshold: >5 complaints/month triggers mandatory audit

- Response Time: Investigation within 48 hours of complaint

Mitigation 6: Explainability Requirement

- What: Every price must have a SHAP explanation

- Implementation:

- SHAP waterfall chart generated for each recommendation

- Plain English summary: "₹4.50 because: cold morning (+₹0.30), high demand (+₹0.20)"

- Customer-facing: "Why this price?" button (future feature)

- Benefit: Transparency enables external scrutiny; discriminatory patterns would be visible

4. Transparency & Explainability

4.1 Explainability Layers

We provide explanations at three levels:

Level 1: Shop Owner (Technical)

- SHAP waterfall chart showing feature contributions

- Confidence score (High/Medium/Low)

- Historical comparison: "This is ₹0.30 higher than usual for this time"

- Override option: "Use different price: ₹___"

Level 2: Barista (Operational)

- Plain English script: "Prices are higher this morning due to cold weather and high demand"

- Suggested customer response: "We adjust prices based on demand, similar to Uber or airlines"

- Escalation path: "If customer is upset, offer 10% discount code for next visit"

Level 3: Customer (Consumer-Facing) - FUTURE

- "Why this price?" button on digital menu board

- Shows: Base price + demand adjustment + weather adjustment

- Educational: "Like airline tickets, coffee prices vary based on demand and costs"

4.2 India DPDP Act, 2023 Compliance

Article 22 Requirement: Right to explanation for automated decisions

Our Compliance:

- ✅ Human oversight: Shop owner must approve prices (not fully automated)

- ✅ Explainability: SHAP provides "meaningful information about the logic involved"

- ✅ Right to object: Customers can request static pricing (shop owner discretion)

- ✅ Data minimization: No PII used; all data anonymized

- ✅ Audit trail: All decisions logged for 12 months

Legal Review: Approved by [Law Firm Name] on [Date] - opinion letter available on request

5. Consumer Protection Measures

5.1 Price Caps & Limits

Global Caps:

- Maximum price increase: +15% above baseline

- Maximum price decrease: -10% below baseline (prevents predatory pricing to kill competition)

- Daily price range: No single product can vary >₹1.00 in a day

Product-Specific Caps:

| Product | Base Price | Max Price | Rationale |

|---|---|---|---|

| Filter Coffee | ₹2.50 | ₹2.80 | Essential item, must stay affordable |

| Latte | ₹3.80 | ₹4.37 | Premium item, more flexibility |

| Pastry | ₹3.00 | ₹3.40 | Impulse purchase, limit to avoid sticker shock |

Time-Based Caps:

- No "midnight surge pricing" (11 PM - 6 AM prices capped at daytime average)

- Holiday pricing capped at +10% (prevents Christmas/Easter exploitation)

5.2 Vulnerable Customer Protections

Low-Income Discount Program:

- Shop owners can opt-in to offer 10% discount to customers with:

- Government benefit cards (Universal Credit, Pension Credit)

- NHS staff ID (healthcare worker discount)

- Student ID (existing student discount programs)

- Implementation: Manual override at POS; not algorithmic

Accessibility Commitment:

- No surcharges for customers requiring extra time/assistance

- Large-print menus available on request

- Digital menu boards include static pricing option (no dynamic pricing confusion)

Complaint Resolution:

- Dedicated email: pricing-concerns@[company].com

- 48-hour response guarantee

- Automatic ₹5 voucher for any pricing-related complaint (gesture of goodwill)

6. Accountability & Governance

6.1 Responsible AI Committee

Committee Members:

- Product Manager (me) - Chair

- Data Scientist - Technical lead

- Legal Counsel - Compliance oversight

- Customer Success Manager - Customer advocate

- External Advisor - Ethics expert (academic or NGO)

Meeting Cadence: Quarterly (or ad-hoc if ethical issue arises)

Responsibilities:

- Review monthly bias audit reports

- Approve any new features that affect pricing logic

- Investigate customer complaints about fairness

- Update ethics framework as regulations evolve

- Publish annual transparency report

6.2 Decision-Making Framework

When to Override the Algorithm:

- ❌ Model recommends price >15% above baseline → Automatic cap

- ❌ Customer complaint about discriminatory pricing → Manual investigation

- ❌ Bias audit detects demographic disparity → Pause rollout, fix model

- ❌ SHAP explanation is unclear → Don't deploy until explainability improves

- ❌ Legal counsel raises compliance concern → Halt deployment immediately

Escalation Path:

- Shop owner notices issue → Logs complaint in app

- Product Manager reviews within 24 hours

- If ethical concern → Escalate to Responsible AI Committee

- Committee decides: Override, investigate, or escalate to legal

- Resolution communicated to complainant within 7 days

6.3 Incident Response Plan

Scenario 1: Customer Claims Discriminatory Pricing

- Immediate: Acknowledge complaint within 24 hours

- Investigation (48 hours):

- Pull SHAP explanation for specific transaction

- Compare to similar transactions (same time, product, weather)

- Interview shop owner about manual overrides

- Resolution (7 days):

- If valid: Issue refund, update model to prevent recurrence

- If invalid: Explain why price was justified (with SHAP chart)

- Publish anonymized case study in next transparency report

Scenario 2: Bias Audit Detects Demographic Disparity

- Immediate: Pause new recommendations (rollback to static pricing)

- Investigation (1 week):

- Identify root cause (proxy feature? data drift?)

- Re-run model without suspect feature

- Test new model on historical data

- Resolution (2 weeks):

- Deploy fixed model

- Notify all customers (via shop owners) about issue + resolution

- Publish incident report

Scenario 3: Regulatory Inquiry (ICO, CMA)

- Immediate: Notify Legal Counsel

- Response (3 days):

- Provide audit trail, SHAP explanations, bias audit reports

- Demonstrate GDPR compliance (anonymization, explainability)

- Offer to present to regulator in person

- Resolution: Cooperate fully; implement any recommended changes

7. Transparency Reporting

7.1 Public Transparency Report (Annual)

We commit to publishing an annual AI Transparency Report including:

Section 1: Model Performance

- Accuracy metrics (RMSE, R²)

- Feature importance rankings

- Drift analysis (how model changed over year)

Section 2: Fairness Audits

- Results of monthly bias audits

- Any demographic disparities detected (and how we fixed them)

- Third-party audit results (if conducted)

Section 3: Customer Impact

- Average price change (e.g., +8% revenue lift)

- Customer satisfaction scores

- Complaint statistics (volume, resolution rate)

Section 4: Ethical Incidents

- Any pricing complaints or bias concerns

- How we resolved them

- What we learned

Section 5: Governance

- Responsible AI Committee meeting summary

- Policy changes or updates

- Regulatory compliance status

Publication: Posted on company website, GitHub repo, and sent to all customers

7.2 Shop Owner Transparency

Every shop owner receives:

Monthly Report:

- Pricing decisions breakdown (% accepted, % overridden, % auto-rejected)

- Customer feedback summary (complaints, compliments)

- Model performance (accuracy on your shop's data)

- Feature importance for your shop (what drives your prices)

Quarterly Report:

- Bias audit summary (your shop vs. network average)

- Competitive benchmarking (your prices vs. nearby shops)

- Recommendations for next quarter

8. Continuous Improvement

8.1 Lessons Learned (So Far)

Learning 1: Explainability Drives Trust

- Initial hypothesis: Shop owners care most about revenue lift

- Reality: Owners won't accept recommendations they don't understand

- Action: Doubled down on SHAP explanations, added plain English summaries

Learning 2: Price Caps Are Essential

- Initial hypothesis: Owners will self-regulate (override bad prices)

- Reality: Some owners blindly accept recommendations (trust algorithm too much)

- Action: Implemented hard caps to prevent exploitation

Learning 3: Bias Audits Catch Subtle Issues

- Initial hypothesis: Excluding demographic features = no bias

- Reality: Temporal patterns (day-of-week) can proxy for religion

- Action: Monthly audits + capping Sunday pricing to prevent discrimination

8.2 Future Ethics Enhancements

V2: Customer-Facing Explainability

- "Why this price?" button on digital menu boards

- QR code to view SHAP explanation

- Educational content about dynamic pricing

V3: Third-Party Audit

- Engage external ethics firm (e.g., AI Now Institute, Ada Lovelace Institute)

- Independent bias audit + recommendations

- Publish results publicly

V4: Real-Time Bias Monitoring

- Dashboard showing demographic fairness metrics in real-time

- Automatic alerts if disparity detected

- Proactive correction before complaints arise

V5: Stakeholder Advisory Board

- Include customer representatives, advocacy groups

- Quarterly feedback on ethical performance

- Co-design future features with customer input

9. Ethical Red Lines (Non-Negotiable)

We will NEVER:

- ❌ Use personally identifiable information (PII) in pricing decisions

- ❌ Charge different prices based on customer identity or demographics

- ❌ Surge-price essential items during emergencies (e.g., water during heatwave)

- ❌ Hide pricing logic from shop owners or customers

- ❌ Deploy model updates without bias audit

- ❌ Ignore customer complaints about fairness

- ❌ Prioritize revenue over ethical concerns

If we violate any red line: Immediate shutdown + public apology + external audit

10. Resources & References

10.1 Regulatory Frameworks

- India India DPDP Act, 2023: Right to explanation for automated decisions

- ICO AI Auditing Framework (2024): Guidelines for fairness testing

- India AI Regulation White Paper (2023): Principles for responsible AI

- applicable Indian anti-discrimination law: Protected characteristics in India law

10.2 Academic Research

- Mehrabi et al. (2021): "A Survey on Bias and Fairness in Machine Learning"

- Veale & Binns (2017): "Fairer Machine Learning in the Real World"

- Selbst et al. (2019): "Fairness and Abstraction in Sociotechnical Systems"

10.3 Industry Standards

- IEEE 7000-2021: Model Process for Addressing Ethical Concerns

- ISO/IEC 23894: AI Risk Management

- Partnership on AI: Responsible AI Practices

11. Appendix: Bias Audit Checklist

Use this checklist before every model deployment:

Pre-Deployment Checklist

Data:

- No PII or protected characteristics in training data

- Proxy features reviewed and excluded if necessary

- Data representativeness checked (no systematic gaps)

Model:

- Feature importance reviewed for discriminatory patterns

- Fairness metrics calculated (demographic parity, equalized odds)

- SHAP explanations generated for test set

- Edge cases tested (extreme weather, holidays, etc.)

Governance:

- Responsible AI Committee reviewed model

- Legal counsel approved deployment

- Customer complaint process in place

- Rollback plan documented

Monitoring:

- Bias audit scheduled (monthly)

- Complaint tracking dashboard active

- Transparency report plan documented